David Sacks, the new co-chair of the White House’s Council of Advisors on Science and Technology, blasted Anthropic’s “regulatory capture strategy,” warning that the AI giant wants to significantly hamstring the industry.

“My issues with them in the past were related to what I have called their regulatory capture strategy. They do want a permissioning regime in Washington for chips and models, meaning you have to go to Washington to get permission to release new models or to sell GPUs anywhere in the world. I think that's excessively heavy-handed,” Sacks said on the March 27 edition of the All-In podcast.

The former White House AI Czar said Anthropic might have been motivated by ideological reasons rather than a desire to freeze out future competitors.

“Regardless, I do think it is a form of regulatory capture because it plays into the hands of the big companies and creates moats that new entrants will not be able to overcome. So I have, let's say, philosophical objection to that part of it,” Sacks added.

Previously, Sacks went after Anthropic for hiring the “Biden AI team,” suggesting alignment between Anthropic’s support for AI regulations and the Biden administration’s aggressive approach. Sacks also called out Anthropic over these hires after venture capitalist Marc Andreessen said that the Biden administration planned to block AI startups and limit the industry to two or three companies tightly controlled by the federal government.

Notably, Anthropic praised the Biden administration for creating the AI Safety Institute (AISI), a federal body devoted to pushing restrictions on AI, within the National Institute of Standards and Technology (NIST).

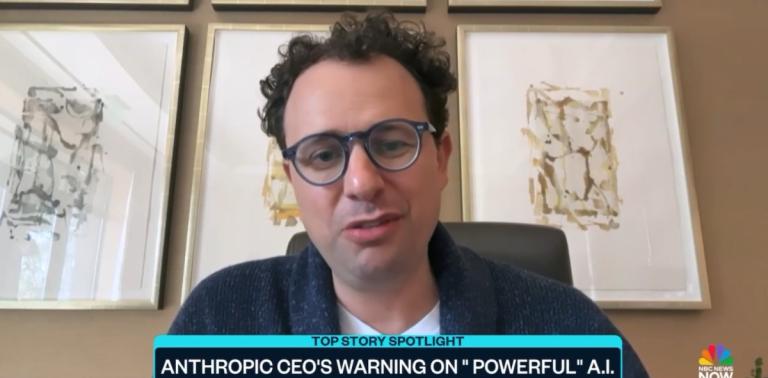

Anthropic has also supported state-level AI regulation and donated $20 million to a political action committee that backs AI restrictions. Anthropic CEO and Co-Founder Dario Amodei himself has complained that the government hasn’t regulated AI enough because there isn’t sufficient “public awareness” of AI risks.

Recently, on the March 27 edition of Plain English with Derek Thompson, Anthropic Co-Founder and Head of Policy Jack Clark suggested that the AI giant’s current approach to research could lead to AI regulation.

Thompson suggested that Anthropic’s approach to safety would be almost useless unless other companies widely adopted it, and asked Clark how he planned to “globalize” that approach.

In response, Clark said Anthropic publishes research on its approach for government officials and other AI companies, arguing that what gets measured can be more easily managed.

Conservatives are under attack! Contact your representatives and demand that Big Tech be held to account to mirror the First Amendment while providing transparency, clarity on hate speech and equal footing for conservatives. If you have been censored, contact us using CensorTrack’s contact form, and help us hold Big Tech accountable.